Can AI Governance Tools Close the AI Governance Gap?

Tools are more often the scapegoat than the issue when MSPs encounter business problems. AI governance may be an exception.

Broadly speaking, when an MSP has a business problem, tooling is more likely to be the excuse than the culprit. It’s not my poorly designed workflows, my spotty documentation, my loosely trained techs, or what have you. It’s my damn tool stack.

As I said, though, that’s usually the case, not always. Sometimes tools really are part of the problem. Maybe AI governance is one of those cases.

You’ll recall that I wrote in dismay recently about the yawning gap between how badly businesses need AI governance services, and want to get them from MSPs, and the relative scarcity of MSPs eager to provide them. I then podcasted in dismay about the same topic a few days later, live from the RSA Conference in San Francisco. The biggest explanations I pointed to for that state of affairs were that a) many MSPs don’t yet grasp AI’s governance dangers, and b) those same MSPs are too busy trying to think through AI basics to get into AI governance.

But tooling, in fairness, is a barrier too.

There are plenty of data loss prevention tools on the market, notes Leeron Walter, vice president of marketing at DLP and insider risk management vendor Teramind, but almost all of them were written well before users within and across companies began exchanging data with chatbots and agents.

“There are gaps in visibility, and it might be much easier for an employee with non-malicious intent to accidentally leak information and data,” Walter notes.

Legacy governance solutions, meanwhile, typically detect policy violations after someone has broken the rules. By that time, notes Harry Labana, SVP and general manager of Proofpoint’s Digital Communications Governance business unit, data leaked by chatbot users and agents has probably become LLM training fodder.

“AI is turning software into an active participant in business communication,” Labana (pictured) says. “What that implies is that governance can’t happen only after the fact. It needs to happen upfront as well.”

Proofpoint’s recently released Nuclei Discovery & Archive Suite is designed to do precisely that, Labana says. Based on technology the vendor acquired last May, the system uses AI agents to spot potential instances of misconduct, insider risk, and AI misuse in real time and notify downstream security solutions capable of blocking them. The goal, according to Labana, is to let businesses capitalize on all of AI’s productivity-boosting power, but responsibly and without asking end users to flawlessly apply policies they don’t fully understand.

“We’re not here trying to stop AI,” he says, “but it’s got to be done in a way that protects you.”

That’s Teramind’s goal as well, Walter says. “Companies owe it to their customers to protect their credit cards,” she says, and multiple regulations require them to meet that obligation. Teramind AI Governance, released, as it happens, on the same day as Proofpoint’s Nuclei solution, is designed to help them do so by applying the company’s insider risk management expertise to a new use case.

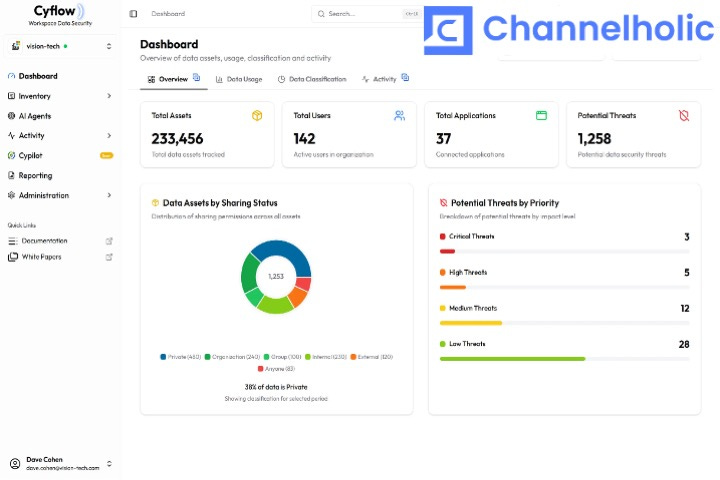

Teramind, however, is an enterprise-oriented company. MSPs need easy, automated, multi-tenant solutions optimized for use by IT providers with limited time, money, and security expertise. That’s where tooling starts becoming a legitimate excuse for MSPs ignoring AI governance, because there aren’t many systems like that. CultureAI has one, as does Kipling Secure (disclosure: Kipling is a client of the consultancy I serve as chief analyst). Cyflow offers a third.

“We’re monitoring everything that’s going on in the company,” says Amit Israel, Cyflow’s co-founder and CRO. “The main thing is that we can block it, meaning we’re not only monitoring,” he adds. “We can block that while in action and we can report that while in action.” Otherwise, Israel continues, an MSP with a few dozen clients could spend its entire day responding to AI security alerts. “It will be a nightmare.”

Though not, arguably, as big a nightmare as the one MSPs will suffer if they continue ignoring AI governance. End users know they’re seeing the tip of a much larger iceberg when it comes to LLM data leakage, Walter says. “They have no idea how quickly things can escalate, and everyone is kind of scrambling to figure out what companies can do to ensure they have those guardrails.”

MSPs really need to have an answer for them.

Over on The Business of Tech

Host Dave Sobel concurs about AI governance:

“So here is the fork for MSPs. Either the MSP becomes the provider that simplifies and governs the automation layer, packaging the controls, the guardrails, the identity, the monitoring, and the human handoffs as a managed service, or the MSP becomes the silent absorber of complexity, eating the coordination tax and exception handling as uncompensated work until margins give out.”

There’s a lot of interesting context for that statement, all waiting for you over on The Business of Tech.